Jointly Learning Heterogeneous Features for RGB-D Activity Recognition

Jian-Fang Hu1, Wei-Shi Zheng1, Jianhuang Lai1, and Jianguo Zhang2

1Sun Yat-sen University, China 2University of Dundee, United Kingdom

Introduction

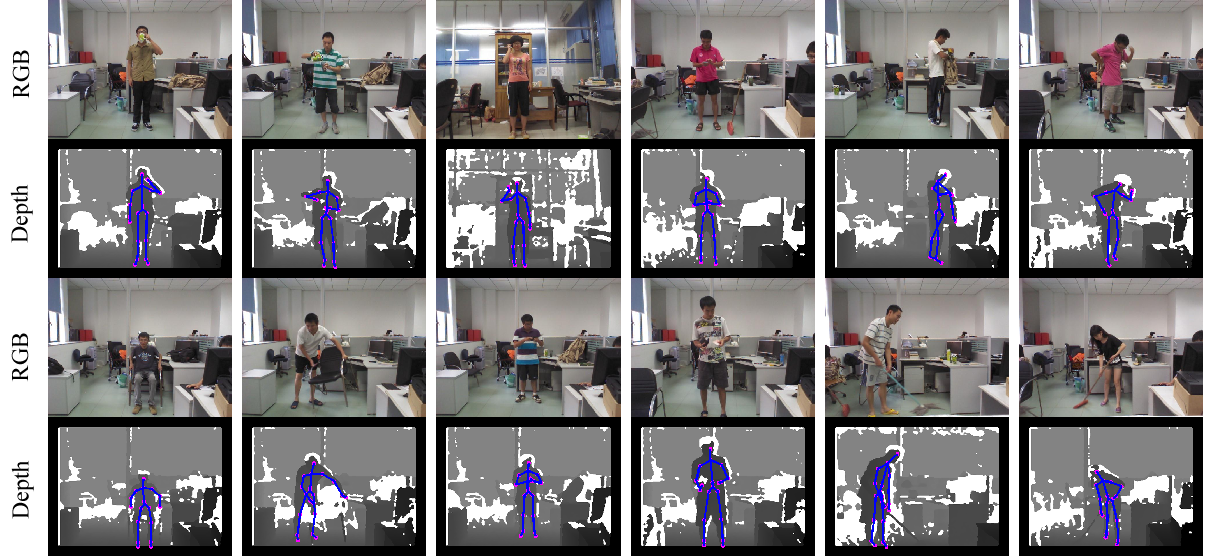

We focus on heterogeneous feature learning for RGB-D activity recognition. Considering that features from different channels could share some similar hidden structures, we propose a joint learning model that simultaneously explores the shared and feature-specific components as an instance of heterogeneous multi-task learning. Within a unified framework, the proposed model is capable of: 1) jointly mining a set of subspaces with the same dimensionality to enable multi-task classifier learning, and 2) quantifying the shared and feature-specific components of the features in those subspaces. To train the joint model efficiently, a three-step iterative optimization algorithm is proposed, followed by two inference models. Extensive results on three activity datasets demonstrate the efficacy of the proposed method. In addition, a novel RGB-D activity dataset focusing on human-object interaction is collected for evaluating the proposed method; it will be made available to the community for RGB-D activity benchmarking and analysis.
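As a rough illustration of the shared versus feature-specific idea, the sketch below maps each feature channel into a subspace of the same dimensionality and then alternates between updating a shared classifier `W0` and per-channel classifiers `Ws[i]`. It is a simplified sketch under stated assumptions, not the JOULE algorithm: the projections are fixed PCA bases rather than jointly learned, the loss is a plain ridge-regularized least-squares fit to one-hot labels, and only two alternating steps are used instead of the three-step procedure mentioned above. The function name `fit_shared_specific` and the parameters `k`, `lam`, and `mu` are hypothetical.

```python
import numpy as np

def fit_shared_specific(channels, Y, k=4, lam=1.0, mu=1.0, n_iter=20):
    """Toy shared + channel-specific classifier learning (illustrative only).

    channels: list of (n, d_i) feature matrices, one per channel.
    Y:        (n, c) one-hot label matrix.
    Returns projections, shared weights, specific weights, objective history.
    """
    # Assumption: use PCA (via SVD) to put every channel in a k-dim subspace.
    thetas, Z = [], []
    for X in channels:
        Xc = X - X.mean(axis=0)
        _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
        theta = Vt[:k].T              # (d_i, k) orthonormal basis
        thetas.append(theta)
        Z.append(Xc @ theta)          # (n, k) projected features

    c = Y.shape[1]
    W0 = np.zeros((k, c))                       # shared component
    Ws = [np.zeros((k, c)) for _ in channels]   # feature-specific components
    I = np.eye(k)

    def objective():
        fit = sum(np.sum((Y - z @ (W0 + w)) ** 2) for z, w in zip(Z, Ws))
        reg = lam * np.sum(W0 ** 2) + mu * sum(np.sum(w ** 2) for w in Ws)
        return fit + reg

    history = []
    for _ in range(n_iter):
        # Step 1: closed-form update of the shared weights, specifics fixed.
        A = sum(z.T @ z for z in Z) + lam * I
        B = sum(z.T @ (Y - z @ w) for z, w in zip(Z, Ws))
        W0 = np.linalg.solve(A, B)
        # Step 2: closed-form update of each specific weight, shared fixed.
        Ws = [np.linalg.solve(z.T @ z + mu * I, z.T @ (Y - z @ W0)) for z in Z]
        history.append(objective())
    return thetas, W0, Ws, history
```

Because each step exactly minimizes the objective over one block of variables, the recorded objective never increases from one iteration to the next. A larger `mu` relative to `lam` pushes more of the classifier into the shared component.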

Download

Please download the dataset and code using the links below:

SYSU 3D HOI Set (480 video clips of 12 HOI activity classes, 5.5 GB): baidu yun (code: kjoy); OneDrive (code: sysu)

Demo: demoJOULE.wmv

Code for feature extraction (DS, DCP and DDP descriptors, 320 MB): baidu yun (code: y524); OneDrive (code: sysu)

Code for the JOULE model: JOULE

Cite

Please cite the following paper if you use the data and code in your research: [1] Jian-Fang Hu, Wei-Shi Zheng, Jianhuang Lai, Jianguo Zhang, “Jointly Learning Heterogeneous Features for RGB-D Activity Recognition”, IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 39(11), 2017, pp. 2186-2200. PDF BibTeX